Find the minimum of an array with a non-increasing and a non-decreasing part

1 Problem

Consider an array of entries from a totally ordered set. There exist a index , such that for all , and for all .

How fast can we find such ? The worst time is . When all the elements are equal, you must transverse the entire array to figure that out.

If is the maximum time an element occurs in the array, then we can find an algorithm that solves this problem in time.

2 Algorithm

The idea is to use ternary search, which works well when all values are distinct, and go for linear search once we figure there are a lot of repeated elements.

First, we present a lemma.

If there exist a index , such that , then at least positions in the array has the same value as .

Image Credit: Vanessa

Li.

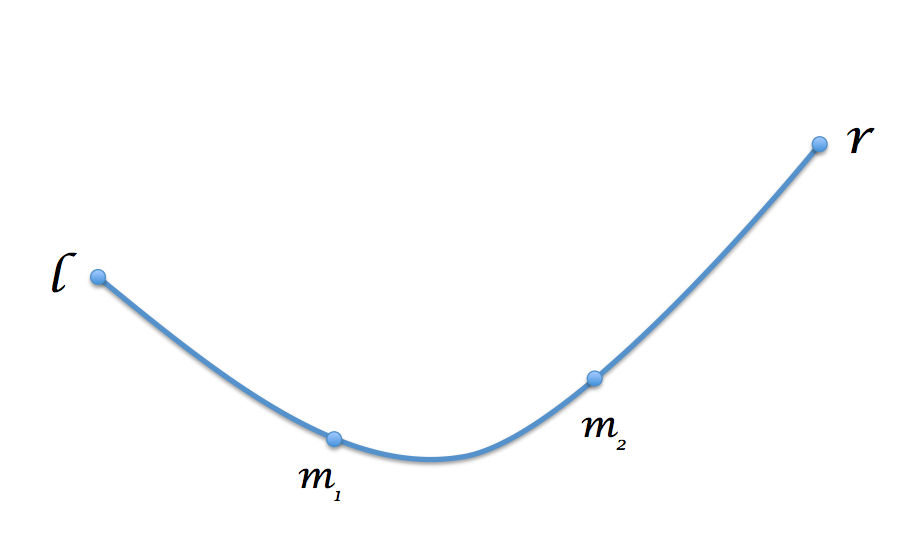

Consider we want to find the minima from index to (not including ), and . Let and such that .

- If , then we know the minima is in . Recurse.

- If , then we know the minima is in . Recurse.

- Otherwise . If or , then by the lemma, at least of the values between position and is . We can also test if , if it is, then of the values between position and is . Since there are so many repeated values, we just do a linear search for the minima.

- Finally, if all above fails, we must have some value between position and take a different value from them. It must be a smaller value, and no value smaller than can exist outside . Recurse on .

Here is the code in Go:

3 Complexity

is the time to run the algorithm on an array of length with repeats.

For larger than , the algorithm will have recursive calls, each one cost time. Once it reaches small , it will spend time on a linear search. The algorithm spends a total of time.

4 Notes

Brosef Stalin offered an alternative logic that offers cleaner code.Can we generalize this to first increase then decrease then increase arrays? One can show is best possible by considering an array with for all , and . There is no way to find with out looking over every position.